technology

Advances in neural networks

admin

November 27, 2023

7 min read

Long gone are the days when machines were associated merely with mechanical tasks. With the advent of neural networks, machines are no longer just tools: they have become learners, capable of recognizing patterns, making predictions, and adjusting their behavior based on the data they receive. This article gives an overview of how neural networks are built, how they operate, and where recent advances are taking them.

Understanding the Basics of Neural Networks

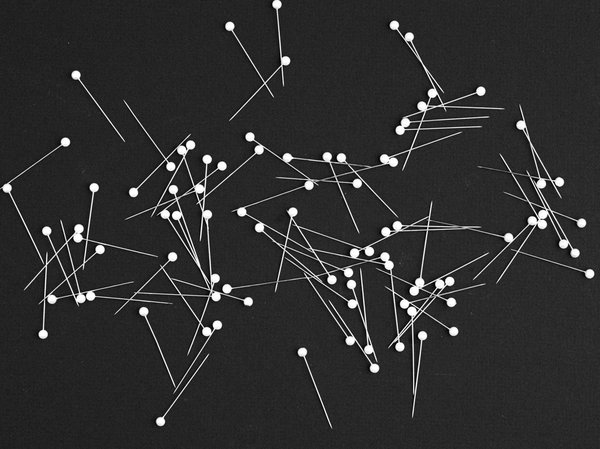

The idea of a neural network draws its inspiration from human cognition and brain function. Neural networks, often referred to as artificial neural networks (ANNs), are algorithms loosely modeled on the structure and function of the neurons in the human brain. A single neuron within an ANN is a mathematical function: it computes a weighted sum of its inputs, adds a bias, applies an activation function, and passes the result on to other neurons.
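To make the idea of a neuron as a mathematical function concrete, here is a minimal sketch in plain Python. The sigmoid activation and the input, weight, and bias values are assumptions chosen for illustration; the article does not prescribe any of them.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Illustrative values only: the weighted sum here is 0.5*2.0 + (-1.0)*1.0 = 0.0
output = neuron(inputs=[0.5, -1.0], weights=[2.0, 1.0], bias=0.0)
print(output)  # sigmoid(0.0) is 0.5
```

Changing the weights or the bias changes how strongly each input pushes the neuron's output up or down, which is exactly what training adjusts.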

These networks are composed of multiple interconnected layers of neurons, with each layer performing a specific function. The input layer receives data, the hidden layers process it, and the output layer generates final results. The real magic happens in the hidden layers, where the network learns the intricacies of the data patterns, enabling it to make predictions or classifications.
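The flow from input layer to hidden layer to output layer can be sketched as a chain of function calls. The layer sizes, the ReLU activation, and every weight below are hypothetical values picked so the arithmetic is easy to follow by hand; a real network would learn its weights from data.

```python
def relu(z):
    """Pass positive values through unchanged, clip negatives to zero."""
    return max(0.0, z)


def identity(z):
    return z


def dense(inputs, weights, biases, activation):
    """One layer: each row of `weights` holds the incoming weights of one neuron."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]


# Hypothetical parameters: 2 inputs -> 3 hidden neurons -> 1 output
HIDDEN_W = [[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]]
HIDDEN_B = [0.0, 0.0, 0.0]
OUTPUT_W = [[1.0, 1.0, 1.0]]
OUTPUT_B = [0.0]


def forward(x):
    hidden = dense(x, HIDDEN_W, HIDDEN_B, relu)           # hidden layer
    return dense(hidden, OUTPUT_W, OUTPUT_B, identity)    # output layer


prediction = forward([1.0, 2.0])
print(prediction)  # hidden activations are [0.0, 1.5, 3.0], so the output is [4.5]
```

Adding more hidden layers means adding more `dense` calls between the input and the output, which is the sense in which a network becomes "deep".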

Deep Learning: A Revolution in Neural Networks

In the quest for more sophisticated and accurate machine learning models, researchers have turned to deep learning. Deep learning is a subset of machine learning that uses neural networks with several hidden layers. These deep networks can model complex patterns in datasets using a deep graph with several processing layers, composed of multiple linear and non-linear transformations.

Deep learning has shown remarkable success in a range of fields, from image and speech recognition to natural language understanding. It has been instrumental in developing algorithms that can identify objects in images, translate languages, and even generate human-like text. The depth of these networks makes them capable of identifying patterns in large datasets that would be nearly impossible for a human to see.

Training Neural Networks: An Intelligent Machine’s Schooling

To function effectively, neural networks require training. This involves providing the network with a large amount of data so it can learn the relevant patterns and behaviors. The process of training a neural network is iterative, meaning that the network learns gradually over time, much like how a child learns math.

During the training process, the network makes predictions based on the input data. These predictions are compared with the actual output, and the difference, or ‘error,’ is calculated. This error is then propagated backward through the network to work out how much each connection weight contributed to it, a process known as backpropagation. An optimization method such as gradient descent then nudges each weight in the direction that shrinks the error. Over many iterations, the network reduces the error and improves its prediction or classification capabilities.
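The predict, compare, and adjust cycle can be sketched with a deliberately tiny network: one input, one hidden neuron, one output. The starting weights, the learning rate, the squared-error loss, and the three training samples are all assumptions made for this example, not values from any particular system.

```python
import math


def train(samples, epochs=500, lr=0.05):
    # Hypothetical starting weights, fixed so the run is reproducible.
    w1, b1, w2, b2 = 0.5, 0.0, 0.5, 0.0
    losses = []
    for _ in range(epochs):
        total = 0.0
        for x, target in samples:
            # Forward pass: make a prediction.
            h = math.tanh(w1 * x + b1)
            y = w2 * h + b2
            error = y - target
            total += error ** 2

            # Backward pass: apply the chain rule to loss = error ** 2.
            dy = 2.0 * error
            dw2, db2 = dy * h, dy
            dz = dy * w2 * (1.0 - h ** 2)  # derivative of tanh is 1 - tanh^2
            dw1, db1 = dz * x, dz

            # Gradient descent step: move each weight against its gradient.
            w1 -= lr * dw1
            b1 -= lr * db1
            w2 -= lr * dw2
            b2 -= lr * db2
        losses.append(total / len(samples))
    return losses


# Toy data: the target is half the input.
samples = [(-1.0, -0.5), (0.0, 0.0), (1.0, 0.5)]
losses = train(samples)
print(f"first epoch loss: {losses[0]:.4f}, last epoch loss: {losses[-1]:.6f}")
```

The backward pass only computes gradients; it is the four update lines at the end of the inner loop that actually change the weights.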

Neural Networks in Action: Real-World Applications

Neural networks have paved the way for numerous breakthroughs and have been deployed in a myriad of applications. In healthcare, they are used to predict diseases, analyze medical images, and aid in drug discovery. In finance, they are used for credit scoring, algorithmic trading, and fraud detection. In the automotive industry, they play a crucial role in the development of autonomous vehicles, enabling them to recognize traffic signs and make decisions.

Furthermore, they power the recommendation systems of many online platforms like Netflix and Amazon, helping to personalize user experience by predicting user preferences based on past behavior. With the rise of big data, the applications of neural networks are expanding rapidly, continually pushing the boundaries of what machines can do.

Future Trends: What Lies Ahead for Neural Networks

The field of neural networks is constantly evolving, with new research and developments promising more refined and powerful models. One such promising trend is the development of spiking neural networks, which more accurately mirror the behavior of human neurons. These networks could potentially revolutionize the way ANNs are designed and function, leading to more energy-efficient and capable models.

Another trend is the growing interest in combining neural networks with quantum computing. Quantum neural networks would use quantum bits (qubits) to represent and process information in ways that classical hardware cannot. Proponents hope this could speed up certain machine learning workloads, although such gains remain largely theoretical for now.

Taken together, neural networks represent a significant shift in computing, offering a new approach to data analysis and machine learning. As research progresses, these networks will continue to evolve, increasing in complexity and capability. The two sections that follow look more closely at spiking and quantum neural networks.

Enhancing Efficiency with Spiking Neural Networks

One of the most exciting advancements in the field of neural networks is the emergence of spiking neural networks. Unlike conventional ANNs, spiking neural networks model the timing of biological neurons’ action potentials. This approach is a significant step towards more biologically plausible models of neural processing, which could in turn lead to more efficient networks.

Biological neurons communicate with each other through short electrical impulses, or ‘spikes.’ Spiking neural networks attempt to mimic this behavior by incorporating time into their functioning, creating a more dynamic and realistic model of neural communication. This addition of a temporal dimension allows these networks to encode and process information more efficiently, potentially leading to more accurate predictions and classifications.

Spiking neural networks also offer the potential for greater energy efficiency. In a traditional artificial neural network, every neuron computes an output on every forward pass, whether or not its input carries much information. In contrast, a neuron in a spiking neural network only ‘fires’, or sends out a signal, when its internal state crosses a certain threshold. Because computation happens only when spikes occur, the overall load can be much lower, particularly on hardware designed for this event-driven style.
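The threshold behavior can be illustrated with a leaky integrate-and-fire neuron, one of the simplest spiking neuron models. This is a sketch under stated assumptions: the discrete time steps, the leak factor, the threshold, and the input sequence are all illustrative choices, and research models are typically more detailed.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Leaky integrate-and-fire: accumulate input, leak over time, spike at threshold."""
    potential = reset
    spikes = []
    for current in input_current:
        # The stored potential decays a little, then the new input is added.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # the neuron fires
            potential = reset  # and its potential returns to rest
        else:
            spikes.append(0)   # below threshold: stay silent
    return spikes


# Three small inputs build up to one spike; a single large input causes another.
spikes = simulate_lif([0.5, 0.5, 0.5, 0.0, 0.0, 1.2])
print(spikes)  # [0, 0, 1, 0, 0, 1]
```

Note that the neuron is silent on four of the six time steps. In an event-driven system, downstream neurons would have nothing to process on those steps, which is where the potential savings come from.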

As the field advances, researchers are actively exploring various methods to optimize these networks, including adjusting the synaptic weights, incorporating more complex neuron models, and developing new learning rules. Some published research suggests that more complex neuron models can enhance the computational power of spiking neural networks.

Quantum Computing and Neural Networks: A Powerful Alliance

As technology progresses, quantum computing has shown potential to reshape various fields, including neural networks. Rather than simply running many classical computations at once, a quantum computer manipulates quantum states, which lets certain problems be solved with far fewer steps than the best known classical methods. Quantum neural networks, which blend the fundamentals of quantum physics and neural networks, are being studied as a way to bring such advantages to machine learning.

In a quantum neural network, information is carried by quantum bits, or ‘qubits,’ instead of classical values. Unlike a binary bit, which is always either 0 or 1, a qubit can be in a superposition: a weighted combination of both states that resolves to a single 0 or 1 only when it is measured. A register of qubits can therefore represent a very large number of combinations at once, and a well-designed quantum algorithm can exploit this to gain speed on some tasks, although a measurement still returns only one outcome.
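Superposition can be simulated classically for a single qubit by tracking its two amplitudes. The sketch below is plain Python rather than a quantum SDK, and the tuple representation and function names are assumptions of this example. It applies a Hadamard gate, a standard gate that turns a definite 0 into an equal superposition.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a qubit given as (amplitude of 0, amplitude of 1)."""
    a, b = state
    s = 1.0 / math.sqrt(2.0)
    return (s * (a + b), s * (a - b))


def probabilities(state):
    """Measurement probabilities are the squared magnitudes of the amplitudes."""
    return tuple(abs(amplitude) ** 2 for amplitude in state)


zero = (1 + 0j, 0 + 0j)   # a qubit that is definitely 0
plus = hadamard(zero)     # an equal superposition of 0 and 1

p0, p1 = probabilities(plus)
print(f"P(0) = {p0:.2f}, P(1) = {p1:.2f}")
```

Each measurement of this qubit yields 0 or 1 with roughly equal probability; the superposition itself is never observed directly.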

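Quantum neural networks can also make use of entanglement, another quantum phenomenon in which the states of two or more qubits become correlated, so that the outcome of measuring one is linked to the outcome of measuring the other, regardless of the distance separating them. Entanglement does not let one qubit send a signal to another, but it allows a quantum circuit to represent relationships between qubits that have no compact classical description. This property could make such networks more expressive, especially in tasks involving large-scale data processing.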
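The correlation that entanglement produces can be seen in a small classical simulation of two qubits. The sketch below builds a Bell state with a Hadamard gate followed by a CNOT gate; the list layout of the amplitudes and the function name are assumptions made for this example.

```python
import math


def bell_state():
    """Return amplitudes for the outcomes 00, 01, 10, 11 after Hadamard + CNOT."""
    s = 1.0 / math.sqrt(2.0)
    # Both qubits start as 0, so all amplitude sits on the outcome 00.
    a00, a01, a10, a11 = 1.0, 0.0, 0.0, 0.0
    # Hadamard on the first qubit mixes the "first qubit 0" and "first qubit 1" halves.
    state = [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]
    # CNOT flips the second qubit when the first is 1: swap outcomes 10 and 11.
    state[2], state[3] = state[3], state[2]
    return state


amplitudes = bell_state()
probs = [a ** 2 for a in amplitudes]
for outcome, p in zip(["00", "01", "10", "11"], probs):
    print(outcome, round(p, 2))
```

Only the outcomes 00 and 11 have non-zero probability, so the two measurement results always agree, even though each one on its own looks like a fair coin flip.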

The integration of quantum computing with neural networks is still in its infancy, and today's quantum hardware remains limited, but the potential benefits are substantial. With ongoing research, quantum neural networks could extend what artificial intelligence is able to do.

Conclusion: The Future of Neural Networks

The field of neural networks has grown rapidly over the years, transforming machine learning and data analysis. With the advent of deep learning, these networks have been able to model complex patterns, reshaping fields from healthcare to finance. The introduction of spiking neural networks has brought us closer to emulating the efficiency and dynamism of biological neurons.

As we look towards the future, the fusion of quantum computing and neural networks points to a possible new era of machine learning. Quantum neural networks, if their potential for speed and efficiency is realized, could change the way we process and interpret large-scale data.

These advancements are a testament to the dynamic nature of the field of neural networks. As our understanding deepens and technology evolves, these networks will continue to grow in sophistication and capability, pushing the boundaries of what is possible in artificial intelligence. The potential applications of these advancements are limitless, promising a future where machines can not only learn but also think and reason, much like their human counterparts.